From Data to Value: The AI Journey

Understanding how data quality translates into model reliability and organizational trust. AI is no longer only a passive tool; it increasingly operates as an autonomous actor.

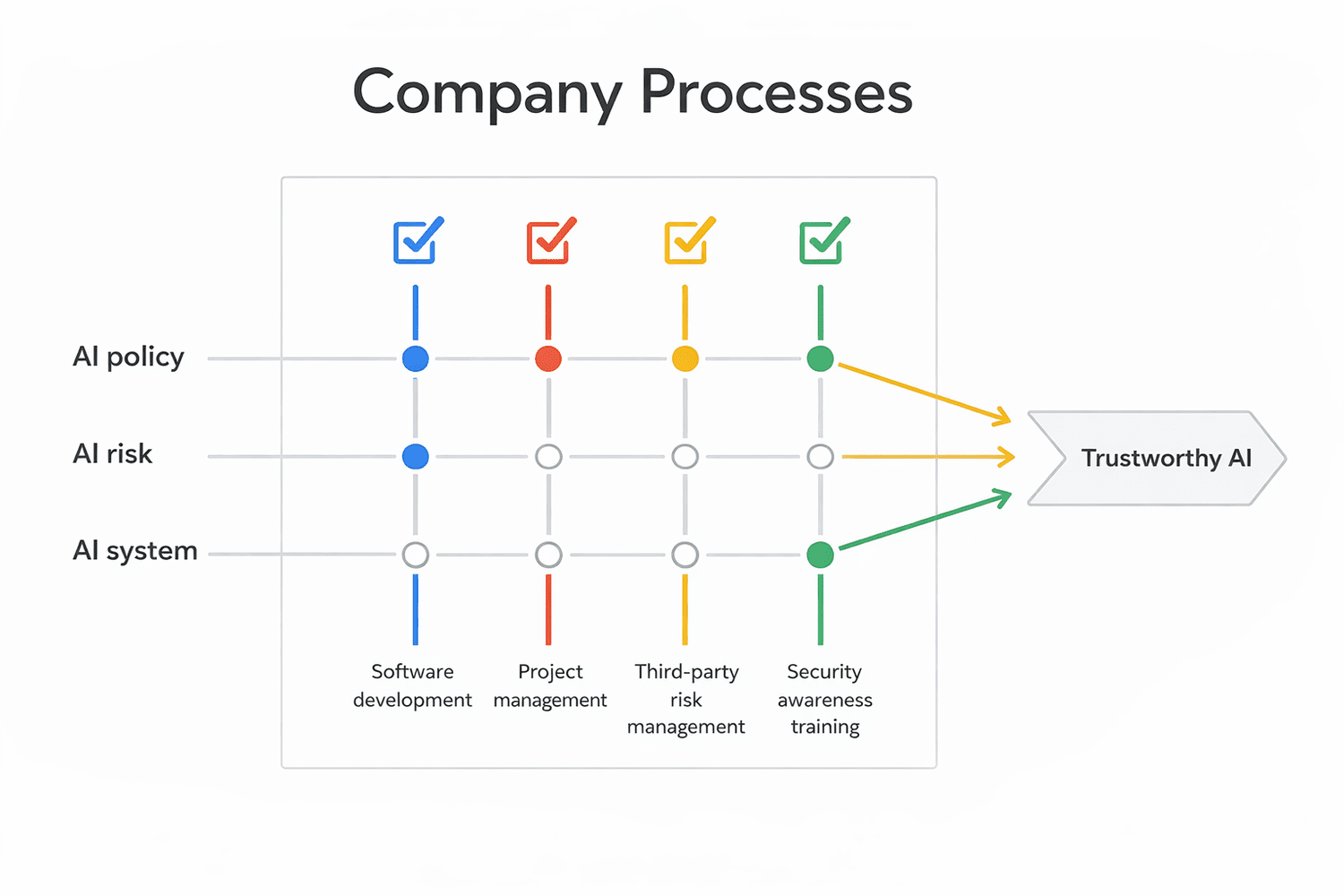

ACADEMIC MODEL: TRUSTWORTHY AI LIFE CYCLES

Organizational Stakeholder Matrix

AI Governance is a cross-functional mission. Each department acts as a "cell" with specific concerns regarding Privacy, Security, and Ethics.

Legal & Compliance

"Ensuring algorithmic explainability for regulatory adherence (EU AI Act) and maintaining IP rights over AI outputs."

Human Resources

"Preventing algorithmic bias in recruitment while protecting employee data privacy in automated monitoring."

IT Infrastructure

"Managing heavy computational resource loads (GPU/TPU) and securing internal/external API integration points."

Cybersecurity

"Defending against adversarial ML threats, prompt injection, and protecting models from inversion attacks."

Finance (CFO)

"Evaluating AI adoption costs vs. ROI while aligning with ESG sustainability and carbon footprint goals."

3rd Party Management

"Auditing supply chain model transparency and ensuring global data residency and vendor compliance."

MIT AI Risk Repository

Deepening Module: Classifying risks by Entity, Intent, and Timing according to the MIT FutureTech initiative (1,700+ classified risks).

Causal Taxonomy

Classifies how and why risks occur.

• Entity (AI vs Human)

• Intent (Accidental vs Malicious)

• Timing (Pre vs Post-Deployment)

Domain Taxonomy

Classifies risks across 7 Domains:

1. Discrimination

2. Privacy & Security

3. Misinformation

4. Malicious Actors

5. Human-Computer Interaction

6. Socioeconomic

7. Safety & Failures
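
The two taxonomies can be combined into a single record per risk. The following is a minimal sketch, assuming a hypothetical in-house risk register; the class names, the `count_by_domain` helper, and the two sample entries are illustrative and are not part of the MIT repository itself.

```python
from dataclasses import dataclass
from enum import Enum


class Entity(Enum):
    AI = "AI"
    HUMAN = "Human"


class Intent(Enum):
    ACCIDENTAL = "Accidental"
    MALICIOUS = "Malicious"


class Timing(Enum):
    PRE_DEPLOYMENT = "Pre-Deployment"
    POST_DEPLOYMENT = "Post-Deployment"


class Domain(Enum):
    DISCRIMINATION = 1
    PRIVACY_SECURITY = 2
    MISINFORMATION = 3
    MALICIOUS_ACTORS = 4
    HUMAN_COMPUTER_INTERACTION = 5
    SOCIOECONOMIC = 6
    SAFETY_FAILURES = 7


@dataclass(frozen=True)
class RiskEntry:
    """One risk, tagged with both the causal and the domain taxonomy."""
    description: str
    entity: Entity
    intent: Intent
    timing: Timing
    domain: Domain


def count_by_domain(entries):
    """Return how many register entries fall into each of the 7 domains."""
    counts = {domain: 0 for domain in Domain}
    for entry in entries:
        counts[entry.domain] += 1
    return counts


# Hypothetical sample entries, for illustration only.
register = [
    RiskEntry("Resume screener ranks candidates unevenly across groups",
              Entity.AI, Intent.ACCIDENTAL, Timing.POST_DEPLOYMENT,
              Domain.DISCRIMINATION),
    RiskEntry("Attacker injects prompts to exfiltrate customer data",
              Entity.HUMAN, Intent.MALICIOUS, Timing.POST_DEPLOYMENT,
              Domain.PRIVACY_SECURITY),
]
```

Tagging every entry on both axes lets a governance team filter by cause (for example, all malicious post-deployment risks) and by domain from the same register.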

Privacy by Design (PbD)

Ann Cavoukian’s 7 Foundational Principles: Transitioning from reactive tools to proactive architectural defaults.

| Principle | Description |
|---|---|
| Proactive not Reactive | Anticipating and preventing privacy-invasive events before they occur. |
| Privacy as the Default | Maximum privacy protection is an automatic setting; no user action required. |
| Embedded into Design | Privacy is an integral part of the architecture, not an add-on. |
| Full Functionality | Positive-Sum approach: Security + Privacy + Utility (Win-Win). |
| End-to-End Security | Full lifecycle protection from ingestion to secure deletion. |
| Visibility and Transparency | Practices and technologies operate as stated and remain open to independent verification. |
| Respect for User Privacy | User-centric design: strong defaults, appropriate notice, and user-friendly options. |
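
"Privacy as the Default" can be expressed directly in code: the most protective values are the defaults, and any relaxation requires an explicit opt-in. This is a minimal sketch; the field names, the 30-day retention value, and the `effective_settings` helper are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class PrivacySettings:
    # Every default is the most protective option (assumed values).
    share_usage_analytics: bool = False
    use_data_for_training: bool = False
    pseudonymize_identifiers: bool = True
    retention_days: int = 30


def effective_settings(user_overrides=None):
    """Start from protective defaults; apply only explicit user opt-ins."""
    settings = PrivacySettings()
    for name, value in (user_overrides or {}).items():
        if not hasattr(settings, name):
            raise KeyError(f"Unknown privacy setting: {name}")
        setattr(settings, name, value)
    return settings


# A user who takes no action keeps full protection.
untouched = effective_settings()

# A user who explicitly opts in changes only that one setting.
opted_in = effective_settings({"share_usage_analytics": True})
```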

The Quantum-AI Intersection

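Understanding why quantum computing, potentially accelerated by AI, breaks classical security models: Shor's algorithm efficiently solves the factoring and discrete-logarithm problems that RSA and elliptic-curve cryptography depend on.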

Harvest Now, Decrypt Later

Encrypted AI datasets are being captured today, awaiting future Cryptographically Relevant Quantum Computers (CRQC) to unlock them.

PQC Transition

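Transitioning to Post-Quantum Cryptography (PQC), such as the NIST-standardized ML-KEM (FIPS 203) and ML-DSA (FIPS 204), is necessary to secure the long-term confidentiality and integrity of AI models and their data.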
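
One common way to reason about the urgency of this transition is Mosca's inequality: if data must stay confidential for X years and migration takes Y years, while a CRQC arrives in Z years, the data is exposed whenever X + Y > Z. The sketch below encodes that rule; the function names and the example year values are assumptions for illustration, not forecasts.

```python
def years_exposed(shelf_life_years, migration_years, years_to_crqc):
    """Mosca's inequality: exposure window is (X + Y) - Z, floored at zero."""
    return max(0.0, shelf_life_years + migration_years - years_to_crqc)


def is_at_risk(shelf_life_years, migration_years, years_to_crqc):
    """True when harvested ciphertext would still matter once a CRQC exists."""
    return years_exposed(shelf_life_years, migration_years, years_to_crqc) > 0


# Illustrative inputs only: a training dataset that must stay confidential
# for 10 years, a 5-year migration, and an assumed 12 years until a CRQC.
exposure = years_exposed(10, 5, 12)
```

With these assumed inputs the dataset would be readable for the last three years of its required confidentiality period, which is why long-lived data is the first candidate for PQC protection.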

OECD AI Incident Monitor

Analyzing realized harms where AI design or deployment led to direct impact on property, rights, or human life.

| Incident (2026) | Harm Type | Classification |
|---|---|---|
| Operation Epic Fury (Military Strike) | Physical (Death) | AI Incident |
| ClawJacked (Agent Hijacking) | Economic / Privacy | AI Incident |
| Paris Election (Disinfo Campaign) | Reputational / Public | AI Incident |

EU AI Act: Risk Pyramid

The world's first comprehensive legal framework for AI, categorizing systems based on the level of harm they can cause.

EU AI ACT REGULATORY TIERS

❌ Prohibited

Social scoring, manipulative AI, real-time remote biometric identification in public spaces (narrow law-enforcement exceptions apply).

⚠️ High Risk

Critical infrastructure, recruitment (HR), credit scoring in banking, health. (Conformity assessments required.)
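
A first-pass labeling of projects against these tiers can be automated before legal review. This is a minimal sketch, assuming hypothetical use-case tags and a `label_project` helper; it covers only the two tiers listed here and is a triage aid, not a legal determination.

```python
from enum import Enum


class Tier(Enum):
    PROHIBITED = "Prohibited"
    HIGH_RISK = "High Risk"
    NEEDS_REVIEW = "Needs Review"  # anything not matched below


# Hypothetical tags derived from the tier descriptions above.
PROHIBITED_USES = {"social_scoring", "manipulative_ai", "public_biometric_id"}
HIGH_RISK_USES = {"critical_infrastructure", "recruitment", "banking", "health"}


def label_project(use_case_tags):
    """Return the most severe tier matched by any of the project's tags."""
    tags = set(use_case_tags)
    if tags & PROHIBITED_USES:
        return Tier.PROHIBITED
    if tags & HIGH_RISK_USES:
        return Tier.HIGH_RISK
    return Tier.NEEDS_REVIEW


cv_screening = label_project(["recruitment"])
citizen_rating = label_project(["recruitment", "social_scoring"])
```

Checking the prohibited set first means a project that touches both tiers is always labeled with the more severe one.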

Strategic Roadmap: Top 10 Steps

A master plan to align AI functionality with global Privacy and Security standards for organizational resilience.

Discovery

Inventory all "Shadow AI" tools across the organization.

Classification

Label projects based on EU AI Act harm tiers.

Steering

Form a cross-functional AI Governance Council.

Policy

Draft the internal AI Ethics & Policy Charter.

Maturity

Benchmark status against NIST AI RMF functions.

Guardrails

Deploy adversarial stress-testing (IBM Adversarial Robustness Toolbox / Microsoft Counterfit).

DPIA

Assess inference-based privacy risks.

Supply Chain

Audit 3rd party providers for PQC readiness.

Monitoring

Automate Model Drift and safety checks.

Culture

Embed ethical awareness in the R&D lifecycle.
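
The Monitoring step can be illustrated with a simple drift check. The sketch below uses the Population Stability Index (PSI), one common drift metric among several; the function names, the 10-bin layout, and the 0.2 alert threshold (a widely used rule of thumb) are assumptions, not values prescribed by this roadmap.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("Baseline sample has no spread; cannot build bins.")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for value in values:
            # Clamp out-of-range live values into the first or last bin.
            index = min(max(int((value - lo) / width), 0), bins - 1)
            counts[index] += 1
        # Small floor avoids log(0) for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_alert(expected, actual, threshold=0.2):
    """Flag drift when PSI exceeds the (assumed) alert threshold."""
    return population_stability_index(expected, actual) > threshold


# Synthetic data for illustration: a uniform baseline and a shifted sample.
baseline = [i / 100 for i in range(100)]
shifted = [0.9 + i / 1000 for i in range(100)]
```

Running a check like this on a schedule, per feature and per model output, turns drift monitoring into an automated gate rather than a manual review.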

"Are we building a tool—or a trap?"

Security is the foundation, Privacy is the boundary, and Governance is the steering wheel.

Trust is the ultimate algorithm.

Bilge Baykurt

MSc Cybersecurity | Academic Edition © 2026